CompTIA Security+ SY0-701

Q: 1

An engineer needs to find a solution that creates an added layer of security by preventing unauthorized access to internal company resources. Which of the following would be the best solution?

Options

Q: 2

Which of the following is the most relevant reason a DPO would develop a data inventory?

Options

Q: 3

While conducting a business continuity tabletop exercise, the security team becomes concerned by potential impacts if a generator fails during failover. Which of the following is the team most likely to consider in regard to risk management activities?

Options

Q: 4

A company receives an alert that a network device vendor, which is widely used in the enterprise, has been banned by the government.

Which of the following will the company's general counsel most likely be concerned with during a hardware refresh of these devices?

Options

Q: 5

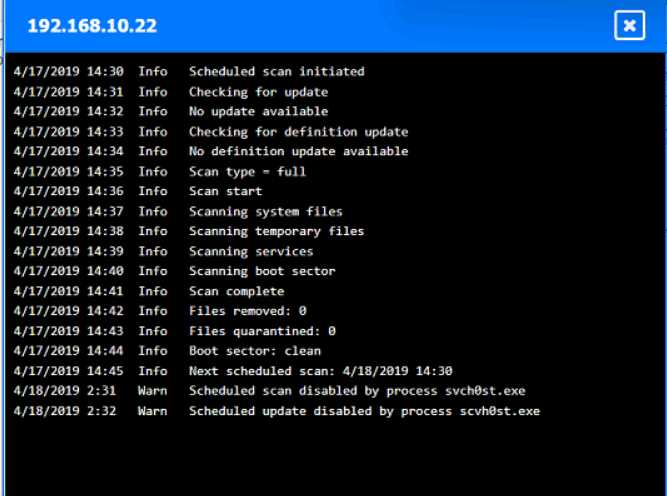

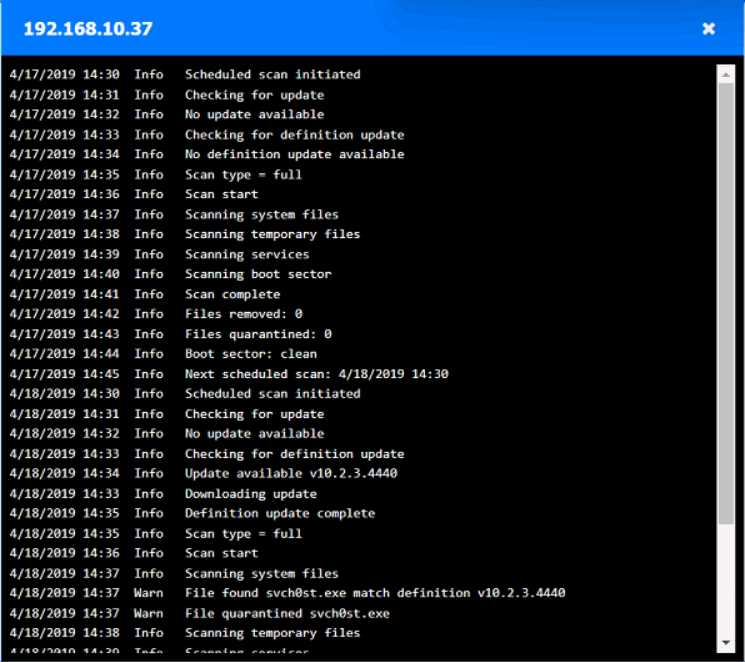

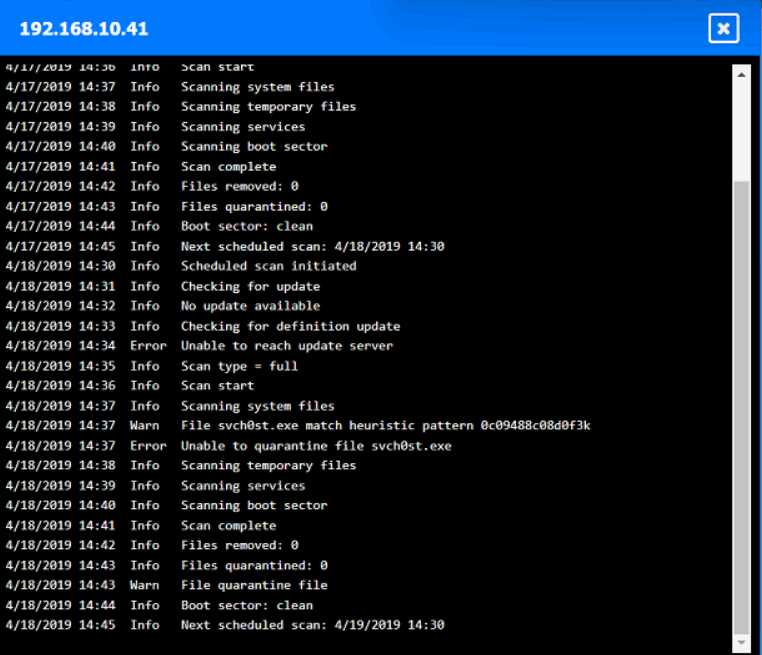

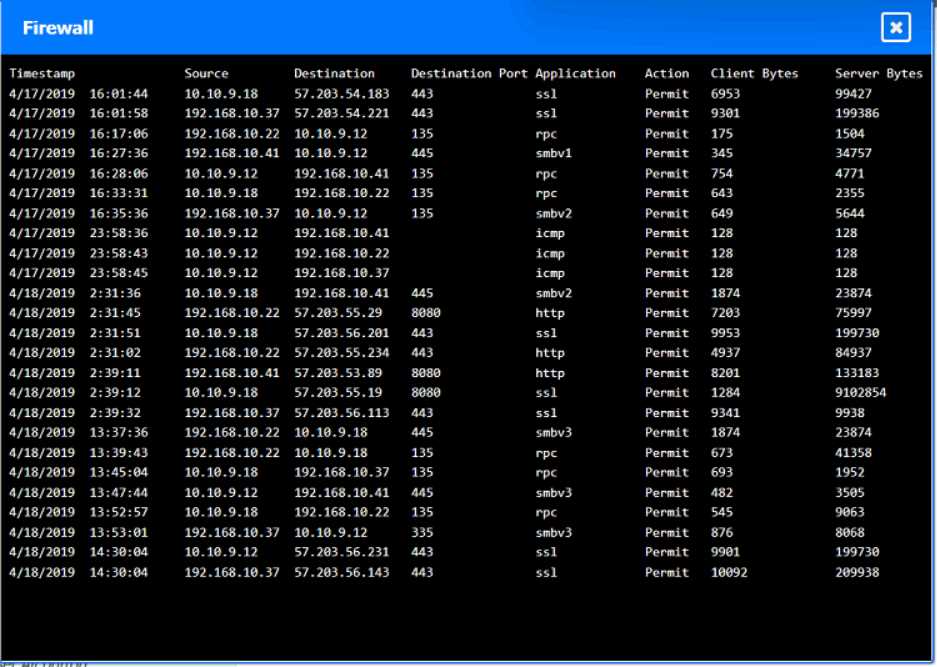

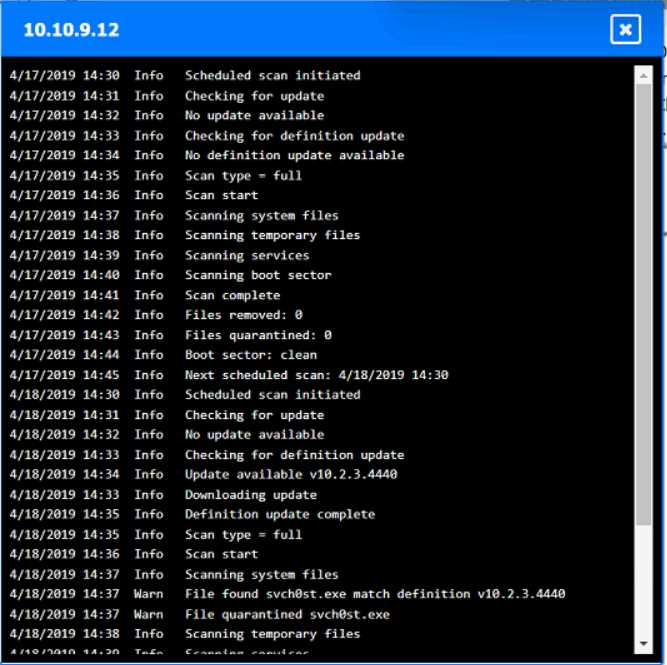

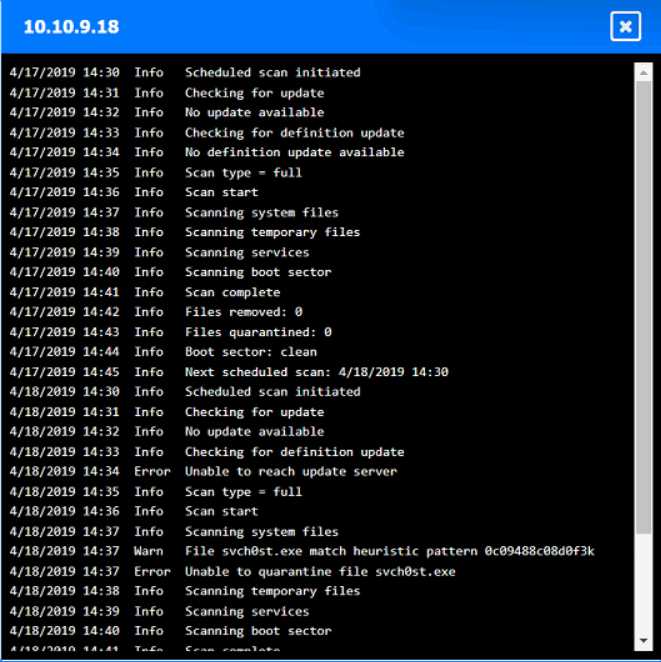

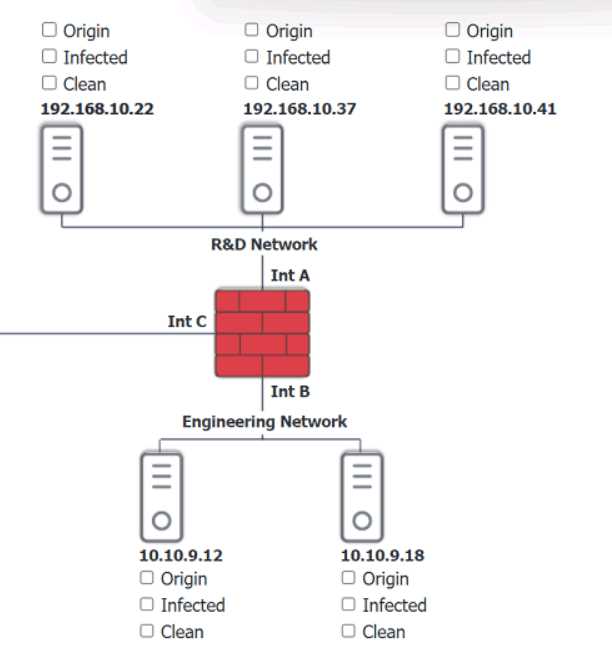

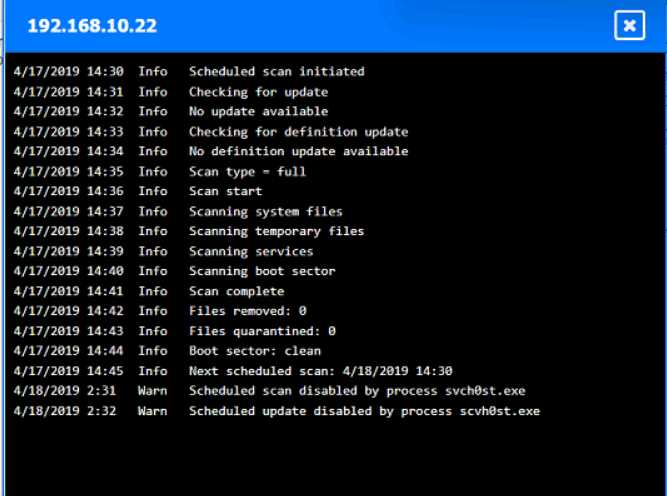

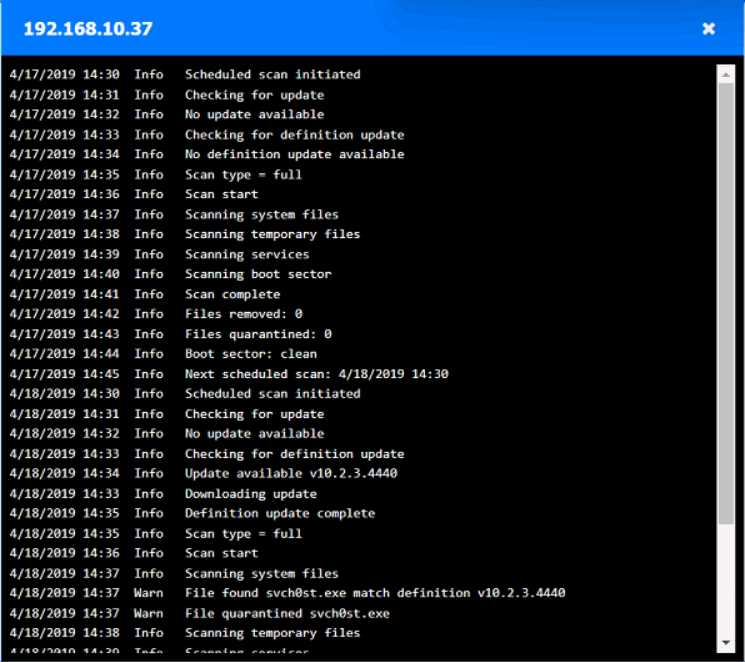

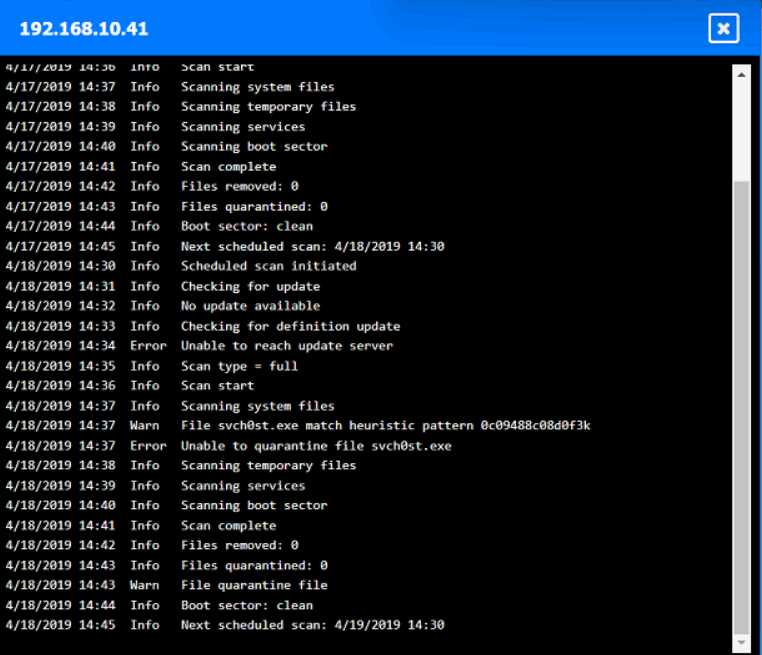

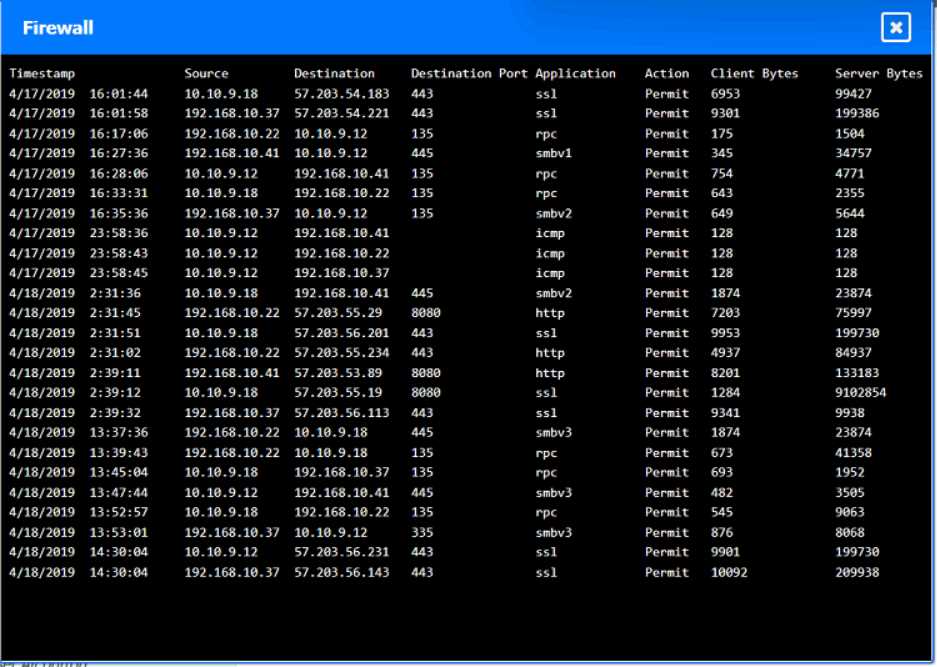

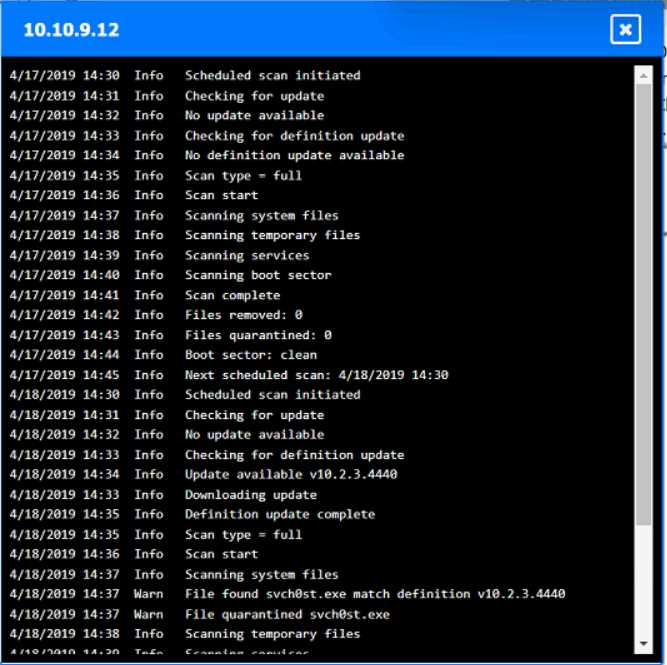

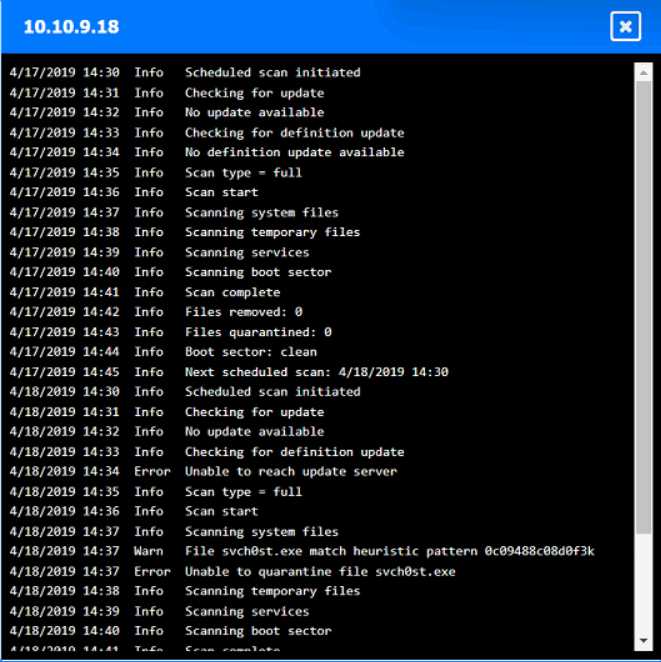

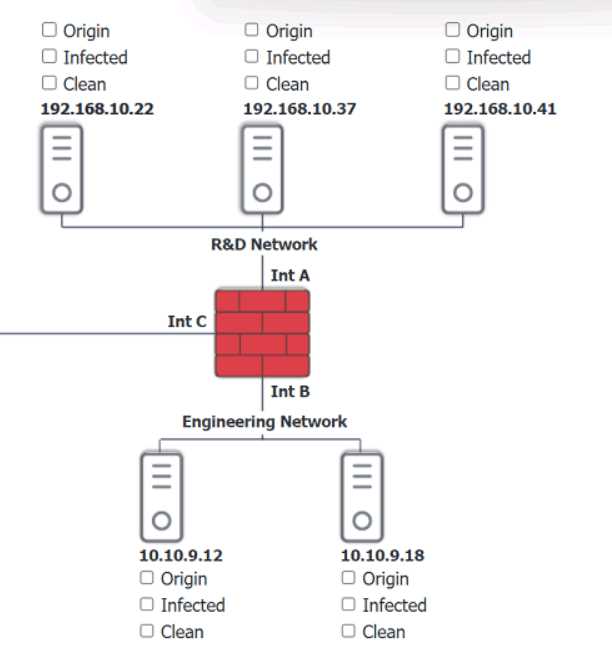

HOTSPOT

You are a security administrator investigating a potential infection on a network.

Click on each host and firewall. Review all logs to determine which host originated the infection, and then identify whether each remaining host is clean or infected.

Your Answer

Q: 6

A network manager wants to protect the company's VPN by implementing multifactor authentication that uses:

- Something you know
- Something you have
- Something you are

Which of the following would accomplish the manager's goal?

Options

Q: 7

Which of the following is best used to detect fraud by assigning employees to different roles?

Options

Q: 8

Which of the following is a common source of unintentional corporate credential leakage in cloud environments?

Options

Q: 9

Which of the following environments utilizes a subset of customer data and is most likely to be used to assess the impacts of major system upgrades and demonstrate system features?

Options

Q: 10

Which of the following is an example of memory injection?

Options
