Q: 6

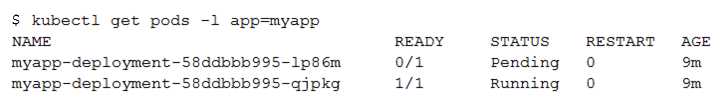

You create a Deployment with 2 replicas in a Google Kubernetes Engine cluster that has a single preemptible node pool. After a few minutes, you use kubectl to examine the status of your Pods and observe that one of them is still in Pending status:

What is the most likely cause?

Options

Discussion

It's B, unless they specifically mention that a preemption just happened. With a single node pool, a resource shortage is the more likely cause.

B, unless the question clearly called out a recent preemption event. In most cases, with just one node and two pods, resource constraints hit first and cause Pending. If the cluster had autoscaling or multiple nodes, then maybe D. Do others agree?

B or D? Pretty sure B is right since resource constraints are the common trap here, but not 100%.

B. The official docs and GCP practice exams both point to resource limits as the usual cause of Pending pods.

B tbh, it's almost always a resource issue when you see Pods stuck in Pending and only one node's available. If the first pod grabs most of the resources, the second can't fit. Anyone see otherwise for GKE with preemptibles?

B makes sense to me since a single preemptible node pool can’t handle both pods if there aren’t enough resources. This is common when you run multiple replicas and hit node limits. Pretty sure I’m right, but let me know if anyone sees it differently.

Probably B, resource constraints on the single node would keep one pod in Pending. Makes sense for this setup.

Seen similar on practice exams and the GCP docs, usually B for this kind of pod Pending issue.

It's B. Had something like this in a mock before. With only one preemptible node pool and two replicas, once the first pod is running, sometimes there aren't enough resources left for the second pod if the node is tight on CPU/memory. Preemptible node pools don't autoscale unless autoscaling is enabled. Pretty sure that's what's going on here; open to pushback if I missed something.
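To make the "second pod can't fit" argument concrete, here is a minimal Python sketch of the fit check being described. It is not the real Kubernetes scheduler, only the basic comparison of pod requests against what is left on a node. The names `Resources`, `fits`, and `schedule` are made up for illustration, and the node and pod sizes are hypothetical numbers, not values from the question.

```python
from dataclasses import dataclass


@dataclass
class Resources:
    """CPU in millicores and memory in MiB (hypothetical helper type)."""
    cpu_millicores: int
    memory_mib: int


def fits(request: Resources, free: Resources) -> bool:
    """A pod fits only if both its CPU and memory requests are available."""
    return (request.cpu_millicores <= free.cpu_millicores
            and request.memory_mib <= free.memory_mib)


def schedule(replicas: int, request: Resources, allocatable: Resources) -> list[str]:
    """Place identical replicas on a single node, one at a time.

    Simplified model: no autoscaling, no other workloads, one node only.
    """
    free = Resources(allocatable.cpu_millicores, allocatable.memory_mib)
    phases = []
    for _ in range(replicas):
        if fits(request, free):
            # Reserve the requested resources for this replica.
            free = Resources(free.cpu_millicores - request.cpu_millicores,
                             free.memory_mib - request.memory_mib)
            phases.append("Running")
        else:
            # Nothing left to reserve, so the replica stays unscheduled.
            phases.append("Pending")
    return phases


# Assumed sizes: a small node and a pod requesting 600m CPU / 1 GiB memory.
node = Resources(cpu_millicores=940, memory_mib=2700)
pod = Resources(cpu_millicores=600, memory_mib=1024)
print(schedule(2, pod, node))  # ['Running', 'Pending']
```

On a real cluster, `kubectl describe pod <pod-name>` lists the scheduling events for a Pending pod, which is where an insufficient CPU or memory message would show up if this is the cause.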
