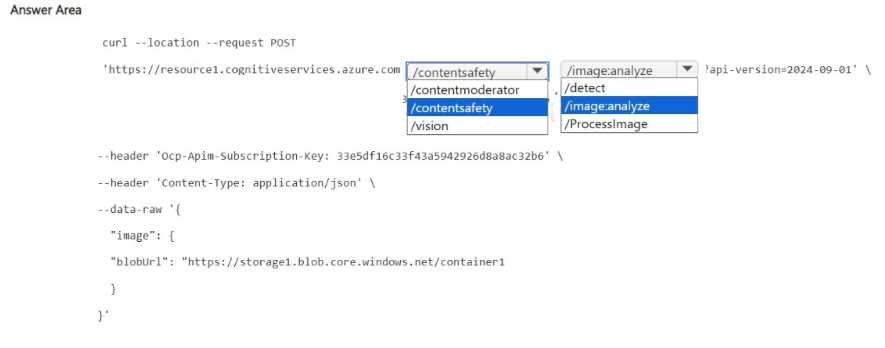

HOTSPOT - You have an Azure subscription that contains an Azure AI Content Safety resource named Resource1 and a storage account named storage1. You create a blob container named container1 and upload a sample set of image files to container1. You need to validate whether Resource1 can identify images that contain potential violence. How should you complete the cURL command? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.

contentsafety and /image:analyze. The legacy stuff won't handle the violence check; that's what exam reports say too. Anyone hear differently?

contentsafety and /image:analyze fit here, not contentmoderator or /ProcessImage. Those belong to the older Content Moderator API, which doesn't fully support violence detection in images. I think some might fall for that legacy trap, but the question is about current Azure AI Content Safety usage. Let me know if you see it differently.
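For anyone who wants to see the whole request rather than just the two dropdown picks, here is a minimal Python sketch of what the cURL call amounts to. It assumes the GA REST route `contentsafety/image:analyze` with `api-version=2023-10-01`, key auth through the `Ocp-Apim-Subscription-Key` header, and an image referenced by `blobUrl` (Resource1 would need read access to container1, e.g. a SAS URL or managed identity). The endpoint, key and blob names are hypothetical, and the function only builds the request; it doesn't send it.

```python
import json
import urllib.request

# Assumed GA API version for Azure AI Content Safety image analysis.
API_VERSION = "2023-10-01"


def build_image_analyze_request(endpoint: str, key: str, blob_url: str) -> urllib.request.Request:
    """Build (but do not send) a POST to the contentsafety/image:analyze route."""
    url = f"{endpoint.rstrip('/')}/contentsafety/image:analyze?api-version={API_VERSION}"
    body = {
        # Point the service at an image in blob storage instead of inlining
        # base64 content; the resource must be able to read this blob.
        "image": {"blobUrl": blob_url},
        # Only ask for the Violence category, since that's what we're validating.
        "categories": ["Violence"],
        # Request the four-level severity scale.
        "outputType": "FourSeverityLevels",
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Ocp-Apim-Subscription-Key": key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


def violence_severity(response: dict) -> int:
    """Pull the Violence severity out of a categoriesAnalysis-style response dict."""
    for item in response.get("categoriesAnalysis", []):
        if item.get("category") == "Violence":
            return item.get("severity", 0)
    return 0  # Category missing from the response: treat as no violence detected.


if __name__ == "__main__":
    req = build_image_analyze_request(
        "https://resource1.cognitiveservices.azure.com",  # hypothetical endpoint
        "<your-key>",  # placeholder key
        "https://storage1.blob.core.windows.net/container1/sample1.jpg",  # hypothetical blob
    )
    print(req.get_method(), req.full_url)
```

The point for the exam is visible in the URL: the path segment is `contentsafety` and the operation is `image:analyze`, not a `contentmoderator/.../ProcessImage` route.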

contentsafety and /image:analyze. Saw something similar in practice tests, but I'm not 100% sure since the API changes sometimes. Official docs and recent exam reports seem to back this, though. If anyone is using a different guide and saw otherwise, let me know.

contentmoderator and /detect. I thought Content Moderator was still valid for this kind of check, but maybe it's outdated now?