https://kxbjsyuhceggsyvxdkof.supabase.co/storage/v1/object/public/file-images/Microsoft_AB-730/page_20_img_1.jpg

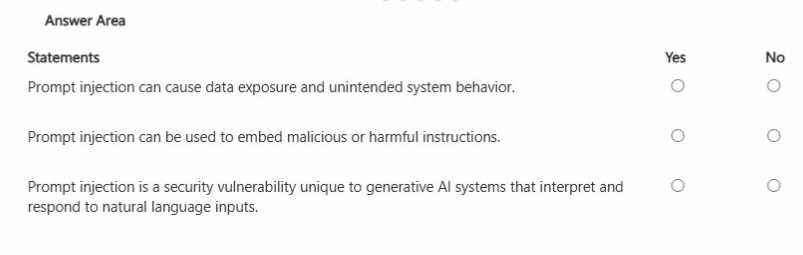

Prompt injection is a generative AI security risk where an attacker inserts instructions (often hidden

in text, documents, webpages, or user inputs) to override or manipulate the assistant’s intended

behavior. This can lead to unintended actions such as ignoring policy controls, producing unsafe

outputs, or attempting to reveal sensitive information. Because generative AI systems follow natural-

language instructions, they can be socially engineered to prioritize malicious content unless

safeguards are in place. This is why prompt injection can cause data exposure (for example,

attempting to extract confidential content from grounded sources) and can also embed harmful

instructions that redirect the model’s behavior. In enterprise settings like Microsoft 365 Copilot,

mitigations include grounding boundaries, permission trimming, content filtering, and instruction

hierarchy (system policies over user instructions). From a business governance perspective, users

should treat untrusted inputs (emails, documents, web text) as potentially hostile and apply least-

privilege access and validation when using AI outputs in decision-making.